Learning from the VisionSpring project

- Jan 20

Updated: Feb 11

VisionSpring had built a successful model selling reading glasses to adults in India. But when they turned their attention to children's eye care, they hit a wall.

The traditional eye screening process, the same one that worked fine for adults, was terrifying children.

As part of the design thinking process, IDEO designers prototyped solutions with a group of 15 children aged 8 to 12.

The first prototype used the standard eye screening approach. A young girl sat down for her screening and immediately started crying. The designers could have dismissed this as an outlier—maybe she was just particularly sensitive. Instead, they recognized a fundamental insight: what felt like a simple vision test to an adult represented something entirely different to a child. The pressure was too intense, the risk of failure too high.

It makes us wonder: In Singapore, would we have simply asked her to 'stop crying', or tried to cajole her instead?

The second iteration involved asking the girl's teacher to conduct the screening with another student. This seemed logical, since children trust their teachers. It failed. The child still cried. The issue wasn't who was administering the test; it was the entire power dynamic of the situation.

We wonder: In Singapore, would we have concluded that the teacher or the child was at fault?

The breakthrough came from a radical flip in that dynamic. The designers asked the girl who had cried to screen her teacher instead. Everything changed. She approached the task with serious concentration. Her classmates, rather than looking on with dread, watched enviously.

The designers had stumbled onto something profound: children didn't fear the screening itself; they feared being judged, tested, and found wanting.

The final prototype built on this insight by having children screen each other.

By framing the medical procedure as "playing doctor," the team transformed a high-stakes test into a desirable game. The children took it seriously and complied willingly.

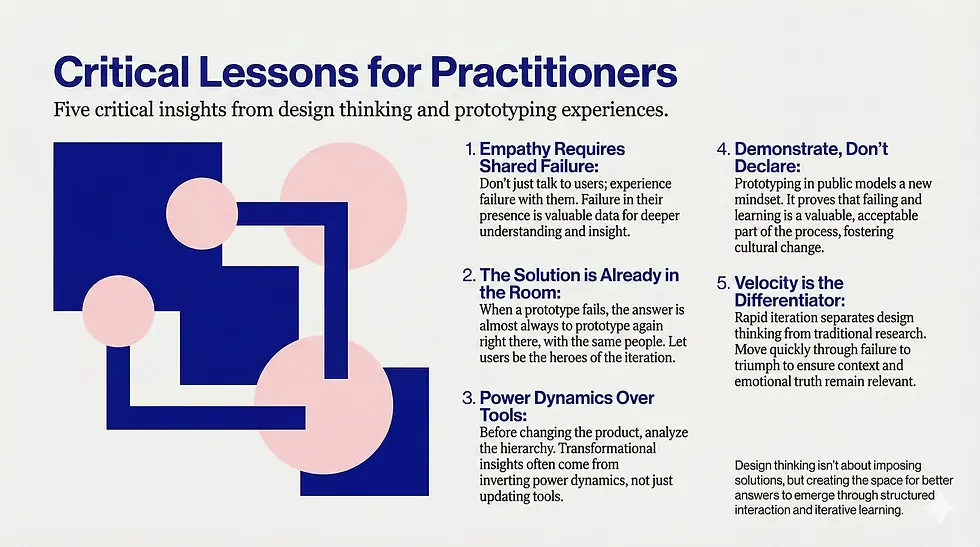

Critical lessons for practitioners

This case study offers several critical lessons that extend far beyond pediatric eye care.

First, empathy requires experiencing failure with users, not just talking to them. The IDEO designers didn't crack this through interviews or observation alone. They had to fail in front of the children, feel the discomfort of making a young girl cry, and sit with that failure long enough to understand what it meant.

We often rush past our failures or sanitize them in retrospectives. VisionSpring's story reminds us that failure in the presence of users is data—often the most valuable data we'll get.

Second, the solution was already in the room. The girl who cried during the first screening became the hero of the third iteration. The children themselves showed the designers what would work.

As practitioners, we need to resist the temptation to retreat to our studios and "fix" things when prototypes fail. The answer is almost always to prototype again, right there, with the same people who just watched us fail.

Third, power dynamics matter more than we think. The designers tried several variations before they realized the fundamental issue was about who had power in the situation.

In our own work, we should ask: Who holds power in this interaction? What would happen if we inverted it?

Sometimes the most transformational insights come from questioning hierarchies we've taken for granted.

Fourth, cultural transformation happens through demonstration, not declaration. Peter Eliassen's observation about the VisionSpring team in India is telling: staff members were initially hesitant to make suggestions that might fail or make them "look silly" in front of supervisors.

The "playing doctor" prototype didn't just solve a user problem. It modeled a new way of working for the entire organization. It proved that failing and learning were not just acceptable but valuable.

As practitioners, we're not just designing solutions; we're modeling a mindset.

The way we prototype, the way we handle failure, the way we involve stakeholders—all of this teaches organizational culture more effectively than any workshop or presentation.

Finally, speed matters. The designers moved from tears to triumph through rapid iteration with 15 children in what appears to have been a single session. They didn't schedule follow-up research. They didn't run the findings through multiple review cycles. They prototyped, failed, learned, and prototyped again immediately.

This velocity is what separates design thinking from traditional research approaches.

We learn by doing, and we do it fast enough that the context doesn't change between iterations.

The VisionSpring case reminds us that design thinking isn't about having brilliant insights in isolation. It's about creating the conditions for insights to emerge through structured interaction with the people we're designing for.

It's about building just enough to fail, failing just enough to learn, and learning just enough to build something that actually works.

The children in that Indian classroom didn't need a better eye chart or a more qualified screener. They needed someone willing to hand them the power and watch what they did with it.

That's design thinking at its best: Not imposing solutions, but creating the space for better answers to emerge.